Death of Chinese Open-Weight AI Exaggerated

Three big drops in a day: DeepSeek, Kimi, Qwen

In recent days I’ve been thinking about the release of DeepSeek’s much-delayed new model V4 as a test of two big questions about China’s large language model ecosystem:

Is China turning the corner away from a norm where many of the most advanced LLMs are released openly and available to the world for download, deployment, and adaptation?

Will Chinese open-weight models continue to be fast followers of US closed models in terms of capability?

Today I think it’s clear that the answer to the first question is no: Rumors of the death of Chinese open-weight LLM advancement have been greatly exaggerated.

As for the second question, I think we need more data from the community to answer fully, but at minimum, we don’t seem to be observing the sudden stall in Chinese model metrics that you might expect if chip controls were biting hard.

Busy day

In April 2026, Chinese open-weight AI development is alive and well.

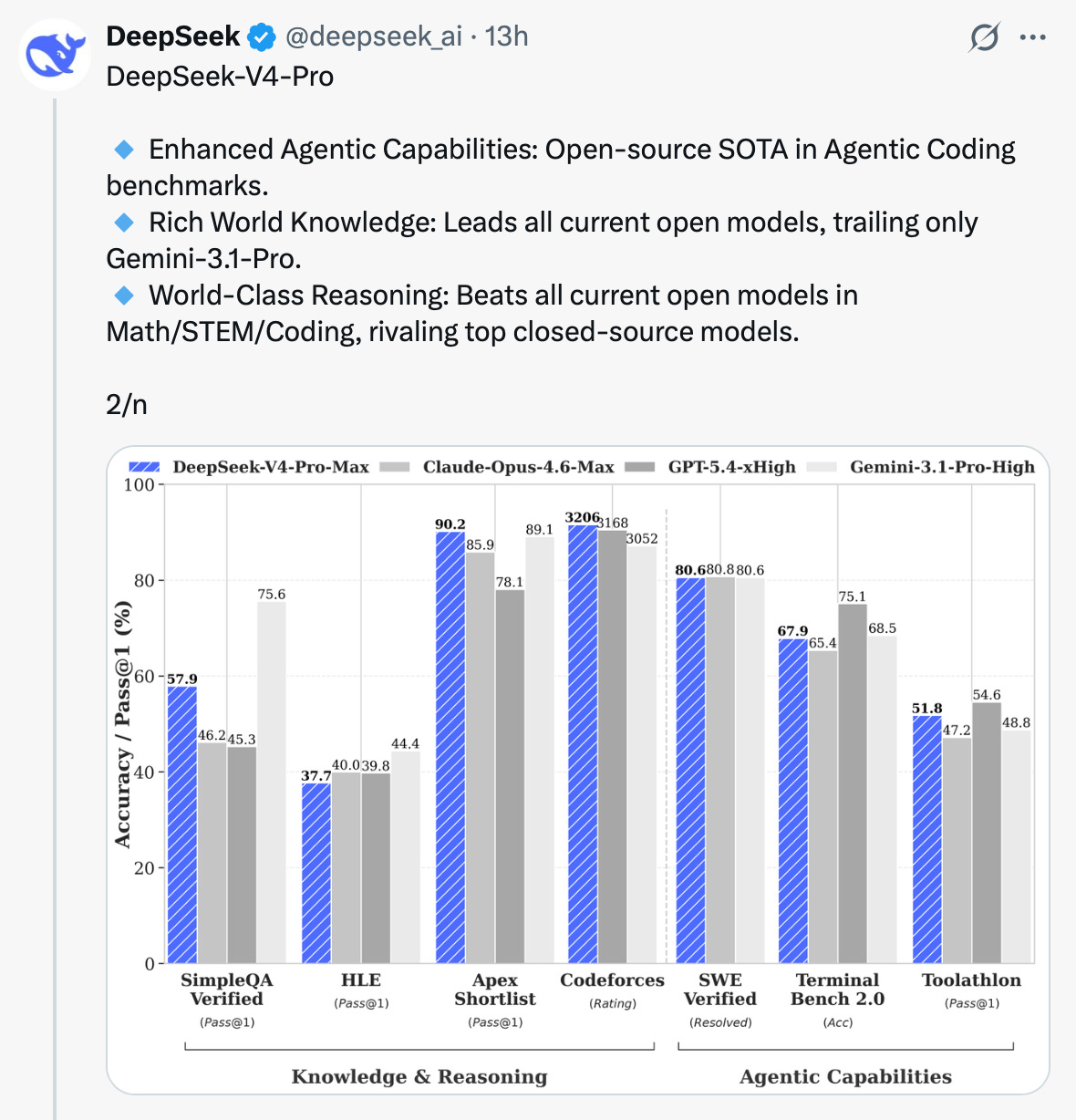

A few hours ago DeepSeek released its much-awaited V4 model, sharing it under the MIT License, which is about as open as it gets. According to the company, the DeepSeek-V4-Pro variant at maximum effort now leads the open-weight ecosystem and rivals closed leaders on a number of metrics.

Not to be outdone, Moonshot AI also released its latest offering, Kimi K2.6, in the last few hours under a modified MIT license, likewise reporting incremental gains in performance. Anecdotally, Kimi models seem to have been popular with agentic and code-assist users looking for less expensive options than the closed US leaders.

Even Alibaba is still in the open-weight conversation, releasing a version of its Qwen 3.6 also in the last few hours.

We’ll see soon enough how all these perform in various workflows, and how they stack up in price-to-performance calculations.

The open-weight question

There truly was reason to suspect that the pattern of Chinese labs releasing their highest-performing models could be coming to an end. Alibaba’s move to keep its new marquee offering, Qwen 3.6 Plus, closed was a break from past practice for China’s cloud leader, which was releasing open models even before DeepSeek made it cool.[1] This could have been a harbinger of a shift in business models or of backroom regulatory pressure, or, as seems to be the case, it could be one company’s change in strategy.

There were vibes-based reasons to wonder whether China’s government, which generally tries to keep the domestic cyberspace environment well supervised, might now view highly capable open-weight models as an unacceptable risk. After much-publicized adoption of the OpenClaw agentic system, which can behave unpredictably, authorities reportedly instructed government officials not to raise their lobsters on work machines. The publicity around Anthropic’s Mythos model and its purported transformative cybersecurity capabilities fuels worries around the world that increasingly advanced models could empower malicious actors.

Did hobbling China work?

As I say, I think the jury is still out on whether chip controls are biting. Proponents of the Biden-era policy, who would generally have favored increasingly tight controls, have been alarmed by the Trump administration’s approach. It’s not clear just how many cutting-edge GPUs are getting through. And it’s not clear how much of the training for these models was done domestically on hardware subject to US export controls vs. domestically on Huawei chips vs. internationally in data centers not covered by export controls.

I started to hear that DeepSeek V4’s release was imminent at the end of 2025. Then I heard it was coming by the end of the month in at least two previous months this year. This time, it’s out, but whatever caused the delays may indeed have to do with computational power. Judging the implications through a US-China strategic lens will require a better understanding of the eventual product versus what the US labs are able to put out in this interval with their unencumbered access to NVIDIA’s latest.

Agree? Disagree? Let me know.

Programming note: In February I promised monthly posts; underestimating the mental load of travel and teaching, I did not deliver in March. So I owe you all—and myself—another. Stand by!

About Here It Comes

Here It Comes is written by me, Graham Webster, a lecturer and research scholar at the Stanford Program on Geopolitics, Technology, and Governance, and editor-in-chief of the DigiChina Project. It is the successor to my earlier newsletter efforts U.S.–China Week and Transpacifica. Here It Comes is an exploration of the onslaught of interactions between US-China relations, technology, and climate change. The opinions expressed here are my own, and I reserve the right to change my mind.

[1] See the table in my article with Stanford colleagues looking at China’s open-weight LLM ecosystem as of December: https://hai.stanford.edu/assets/files/hai-digichina-issue-brief-beyond-deepseek-chinas-diverse-open-weight-ai-ecosystem-policy-implications.pdf